Guardly

A scam-detection and education feature embedded within LinkedIn

INTRODUCTION

Guardly is a UX-led product developed during a 48-hour designathon, exploring how moment-based interventions can support job seekers at the point where scams are most likely to occur.

MY ROLE

I kept everyone aligned under tight time constraints by prioritising work and coordinating parallel workstreams. I contributed to the UX research and problem-definition phase, including framing the core challenge, sourcing supporting data, and shaping user interview questions.

I also owned the product narrative and final presentation to the judging panel, defining the story and communicating Guardly’s value and feasibility.

[Project Scope]

Team of 5

[Role]

UX Designer - Product Manager

[Timeline]

48-hour designathon

View prototype

PROBLEM DISCOVERY

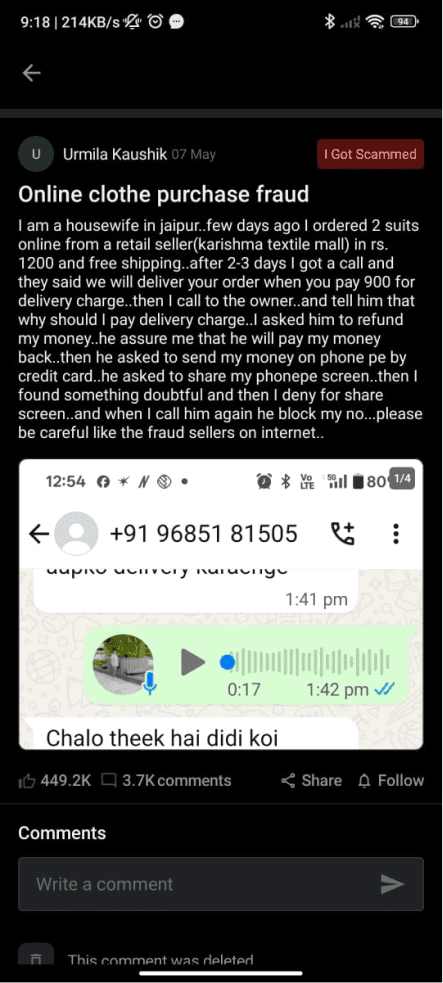

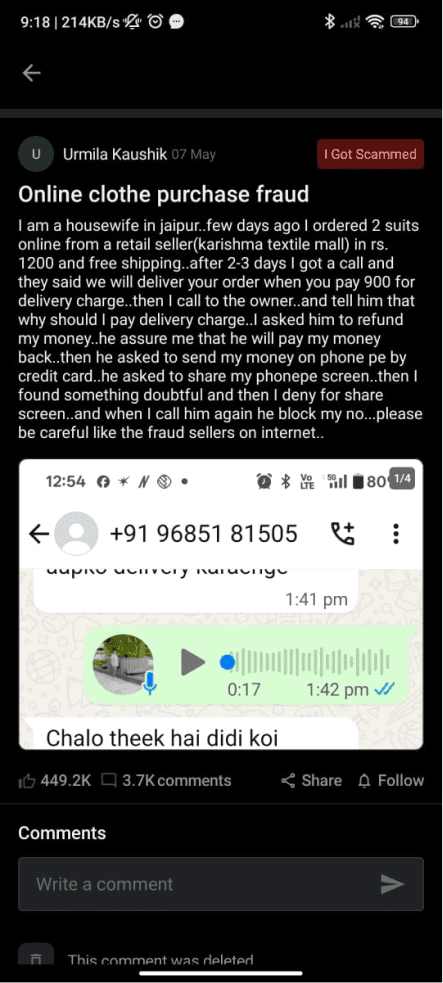

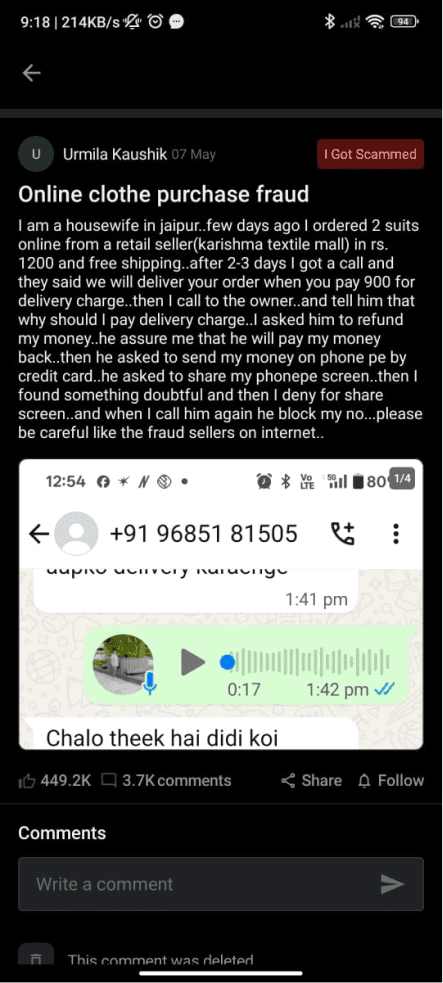

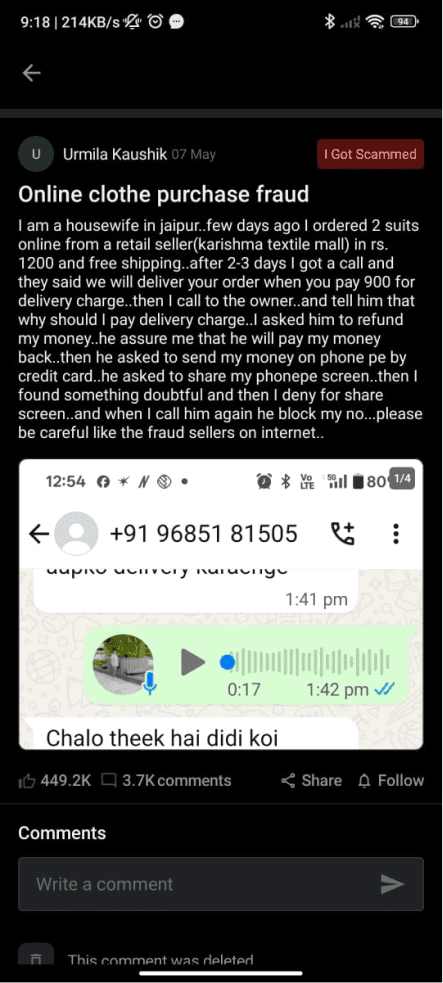

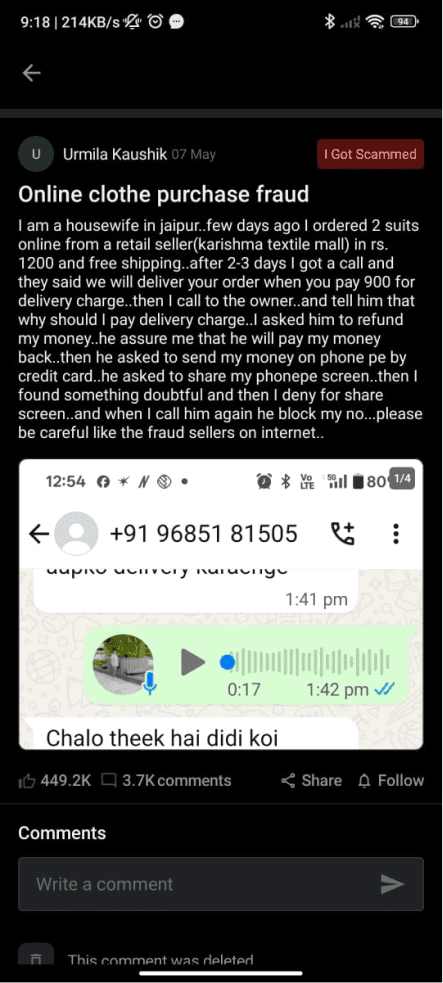

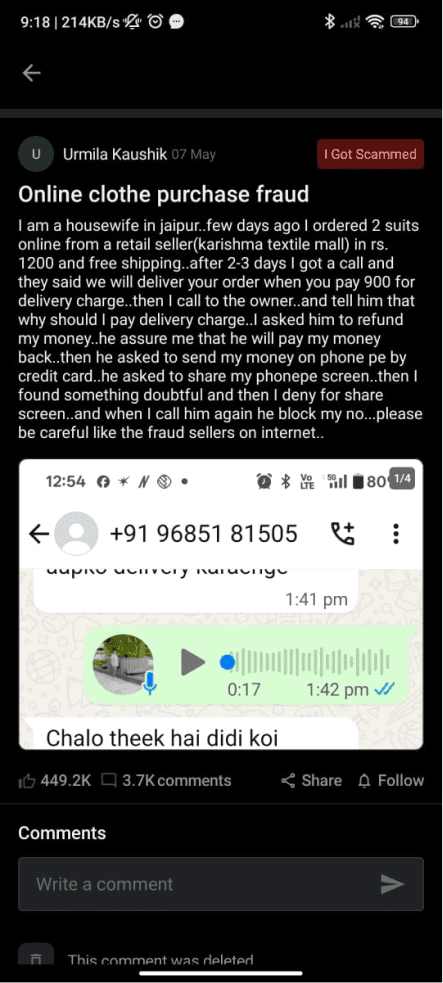

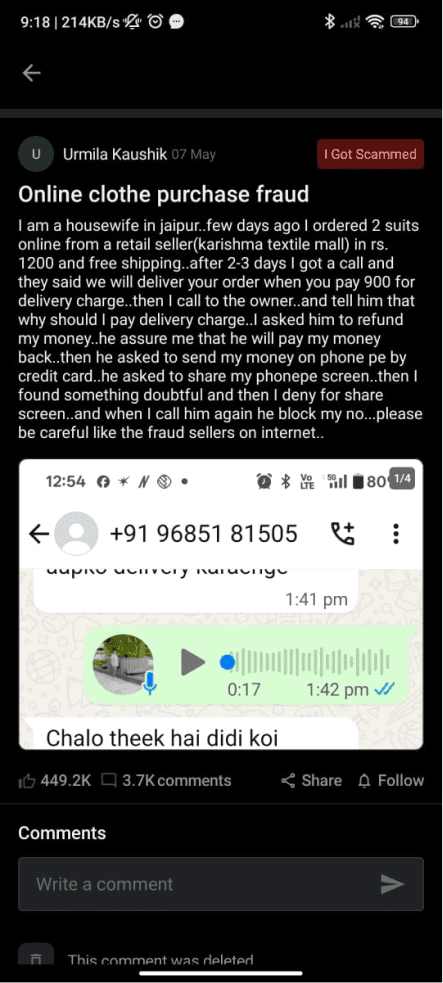

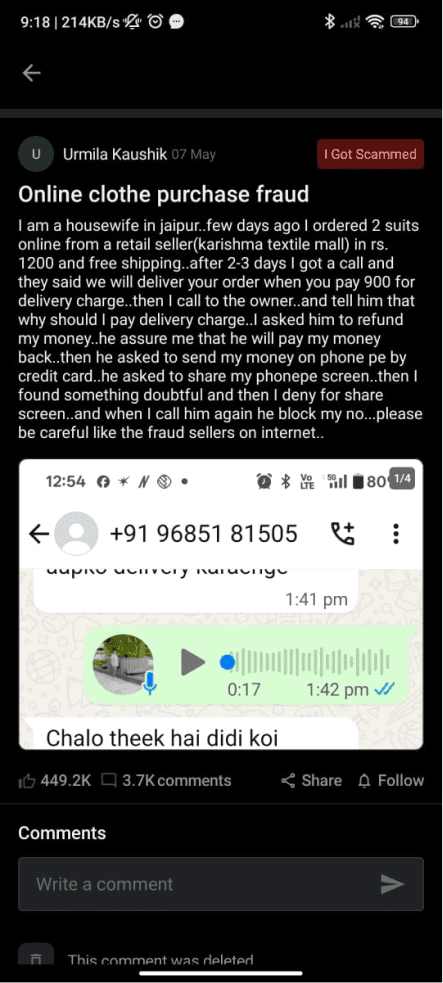

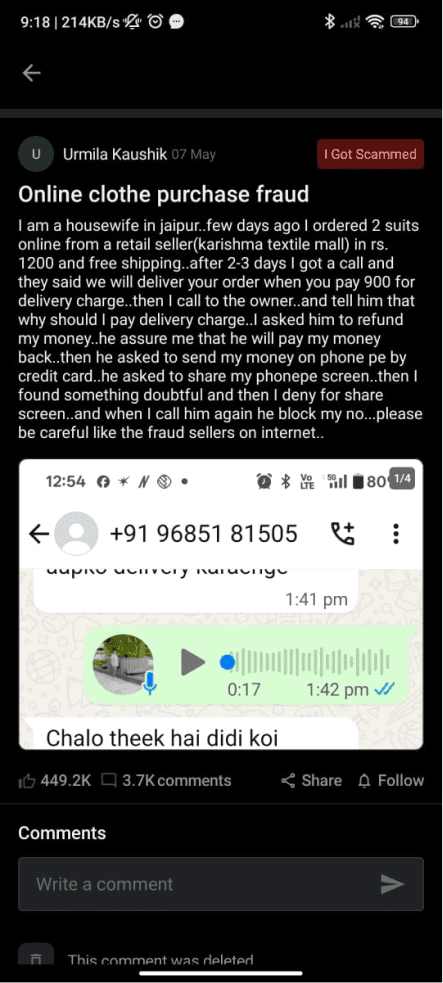

Job scams look real, and they catch people at their most vulnerable moment

Job scams no longer look suspicious. They look like real opportunities — complete with polished job listings, interviews, and official-looking offer letters. The danger often appears late in the process, during payroll onboarding, when job seekers are asked to share personal identification and bank details.

This makes scams especially effective during the job search, a time filled with urgency, hope, and emotional pressure. Many people are confident they won’t fall for scams until they find themselves caught in one.

This sparked a question:

“How might we empower users to confidently spot and report job scams so they can protect their personal information from online predators?”

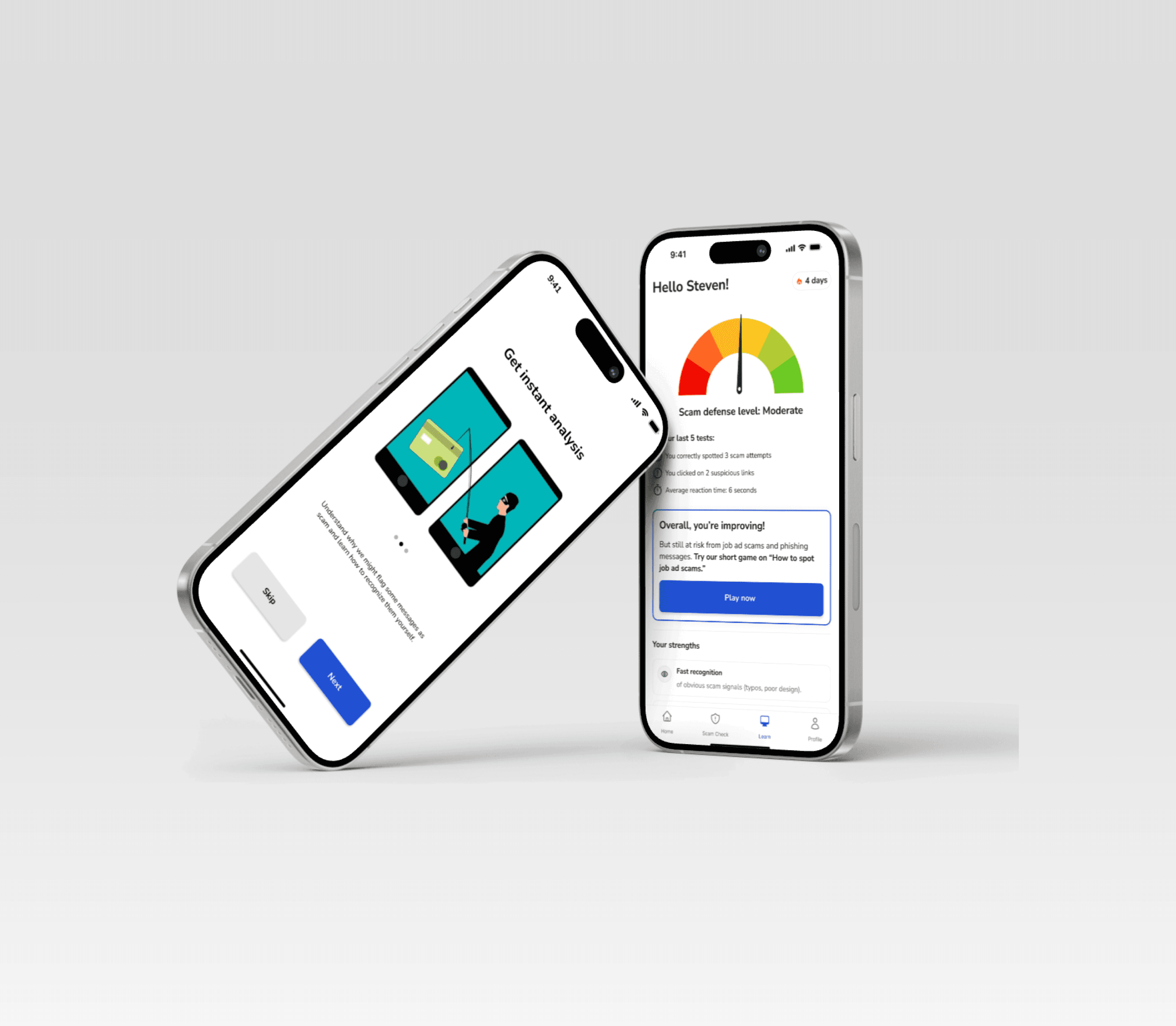

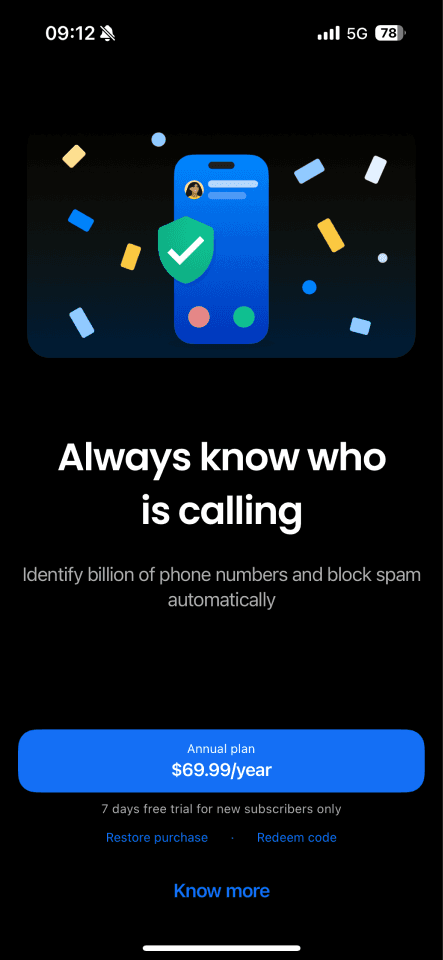

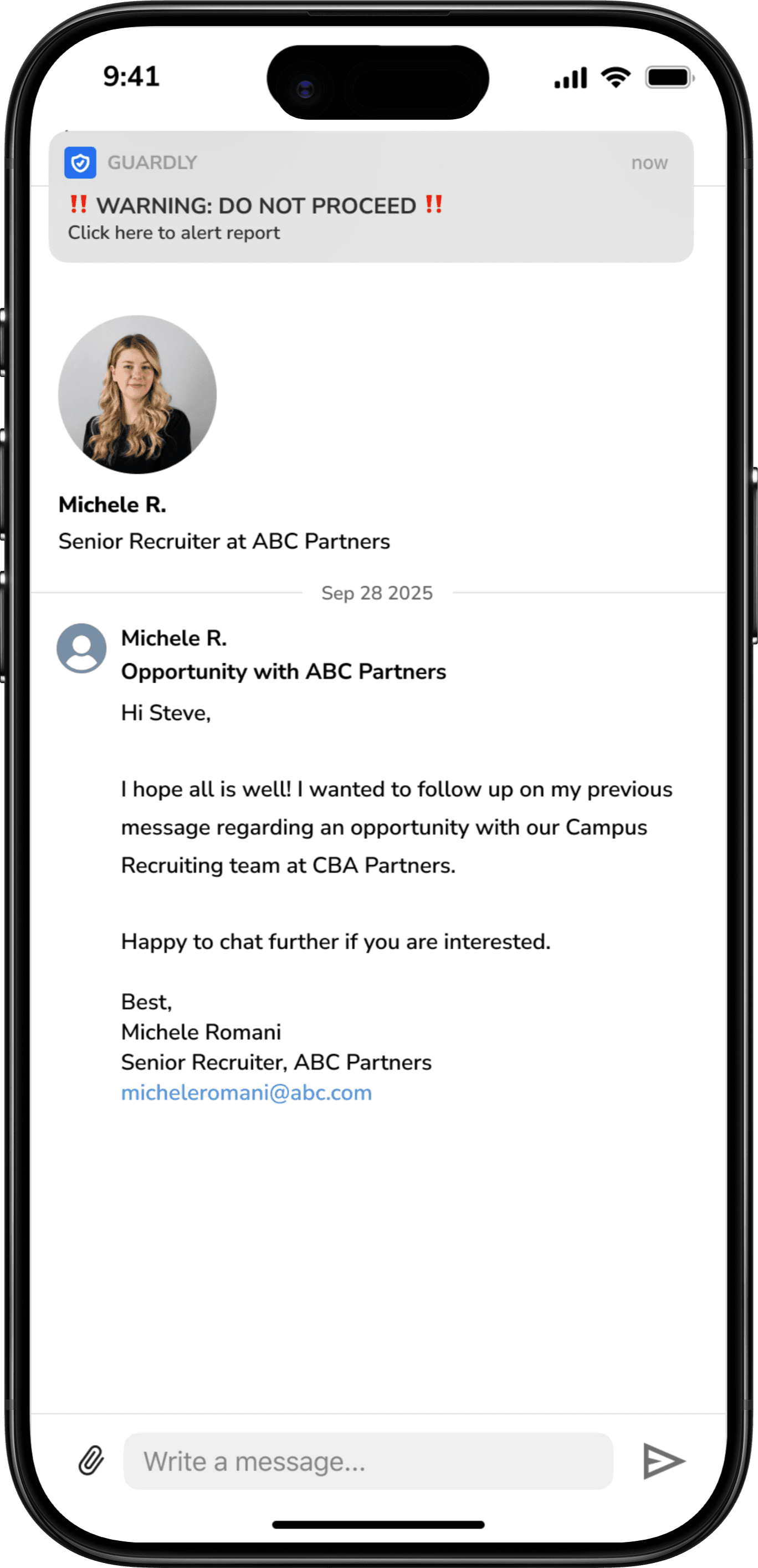

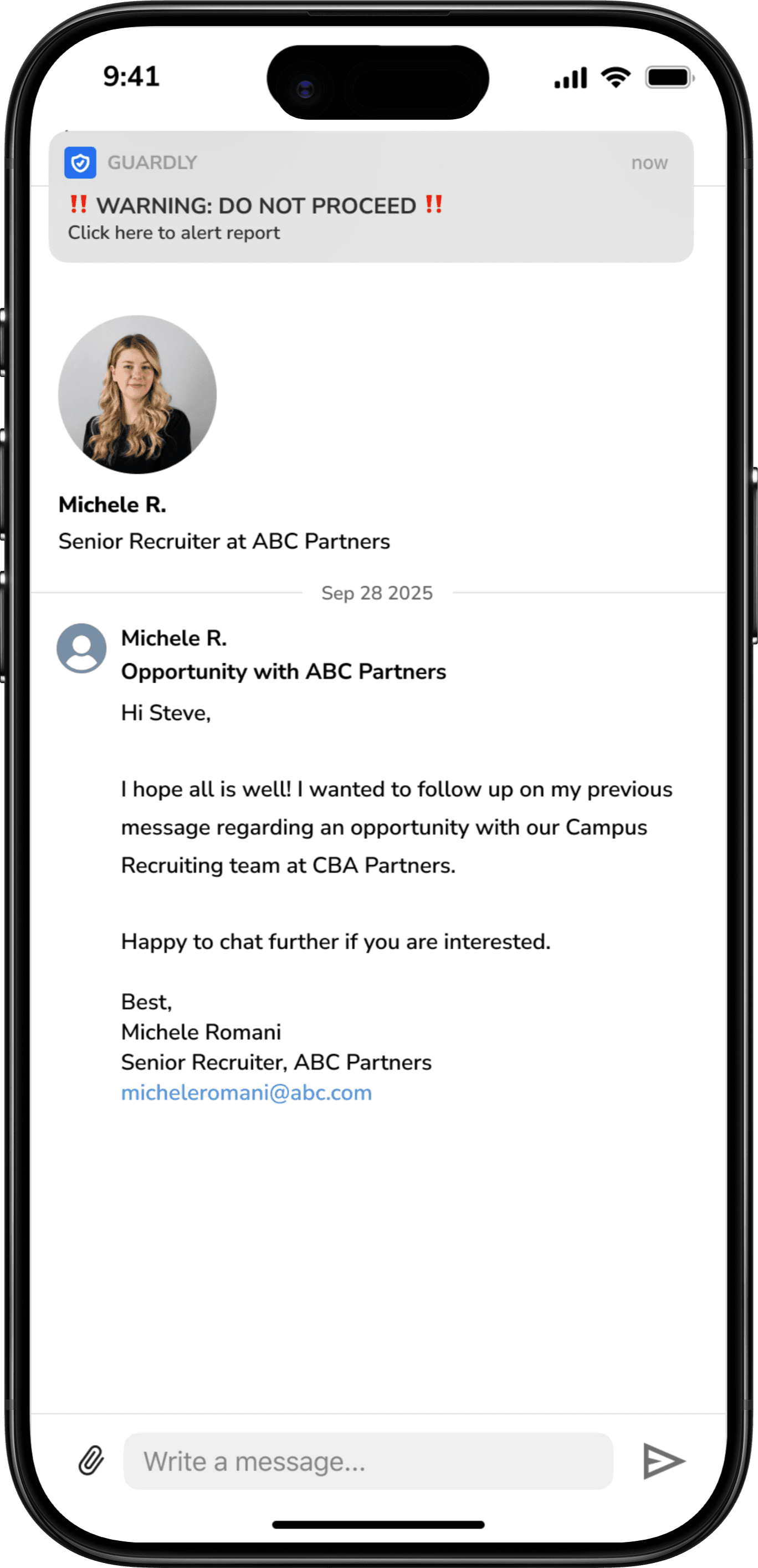

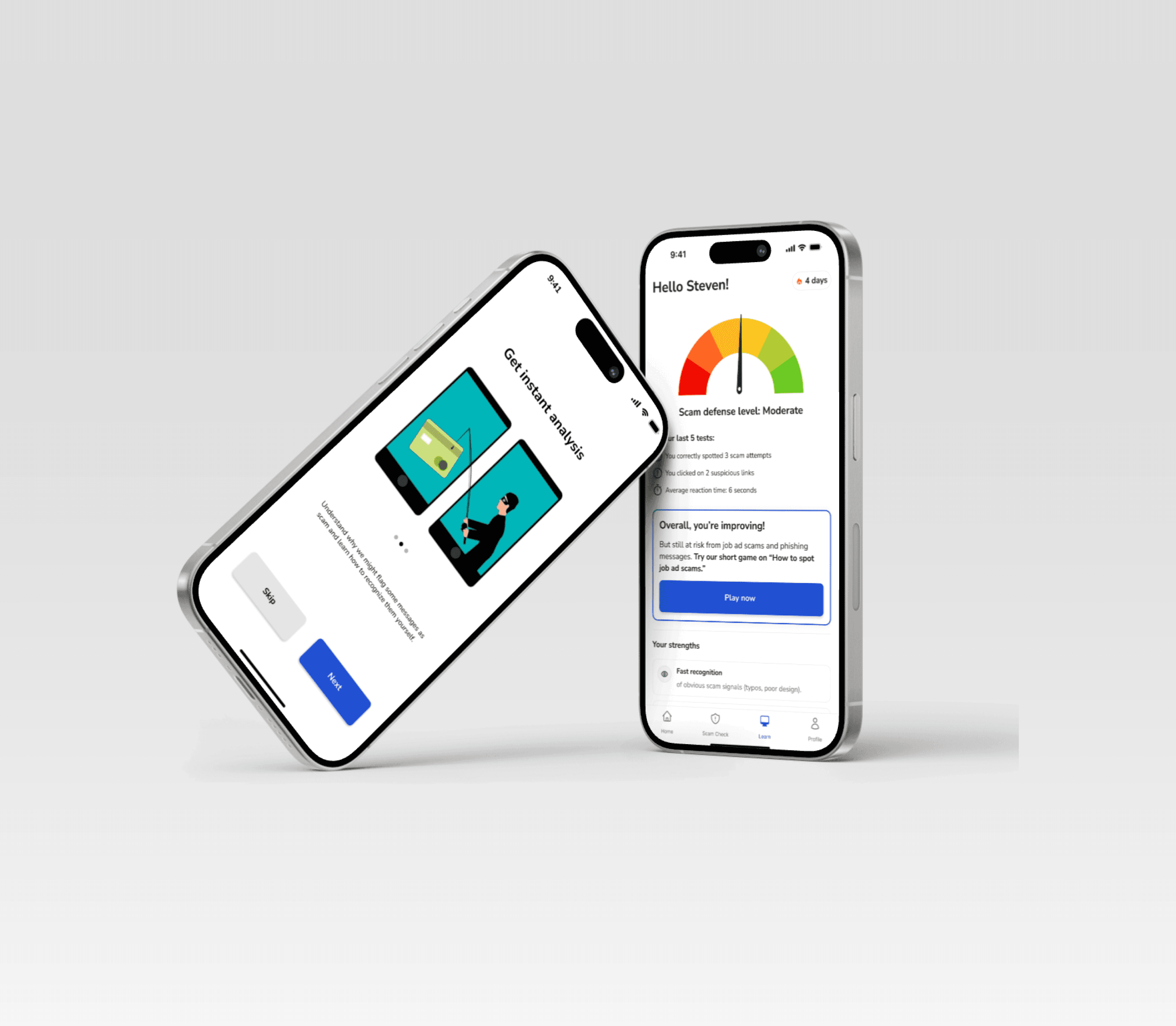

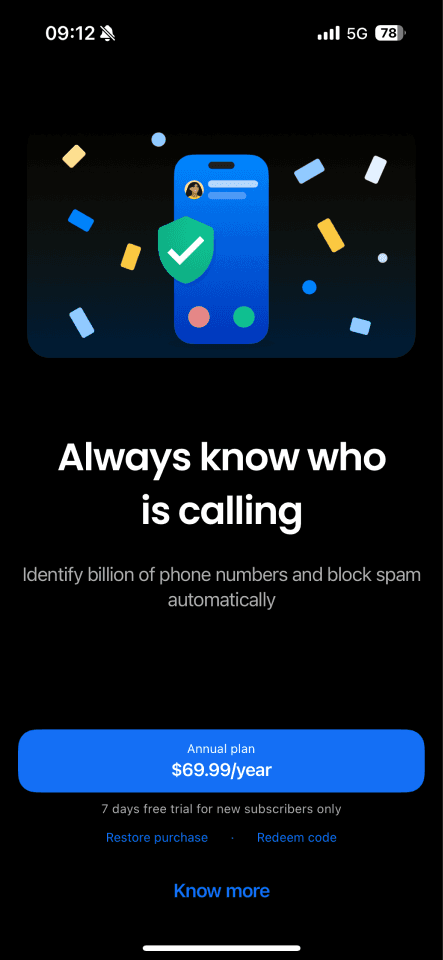

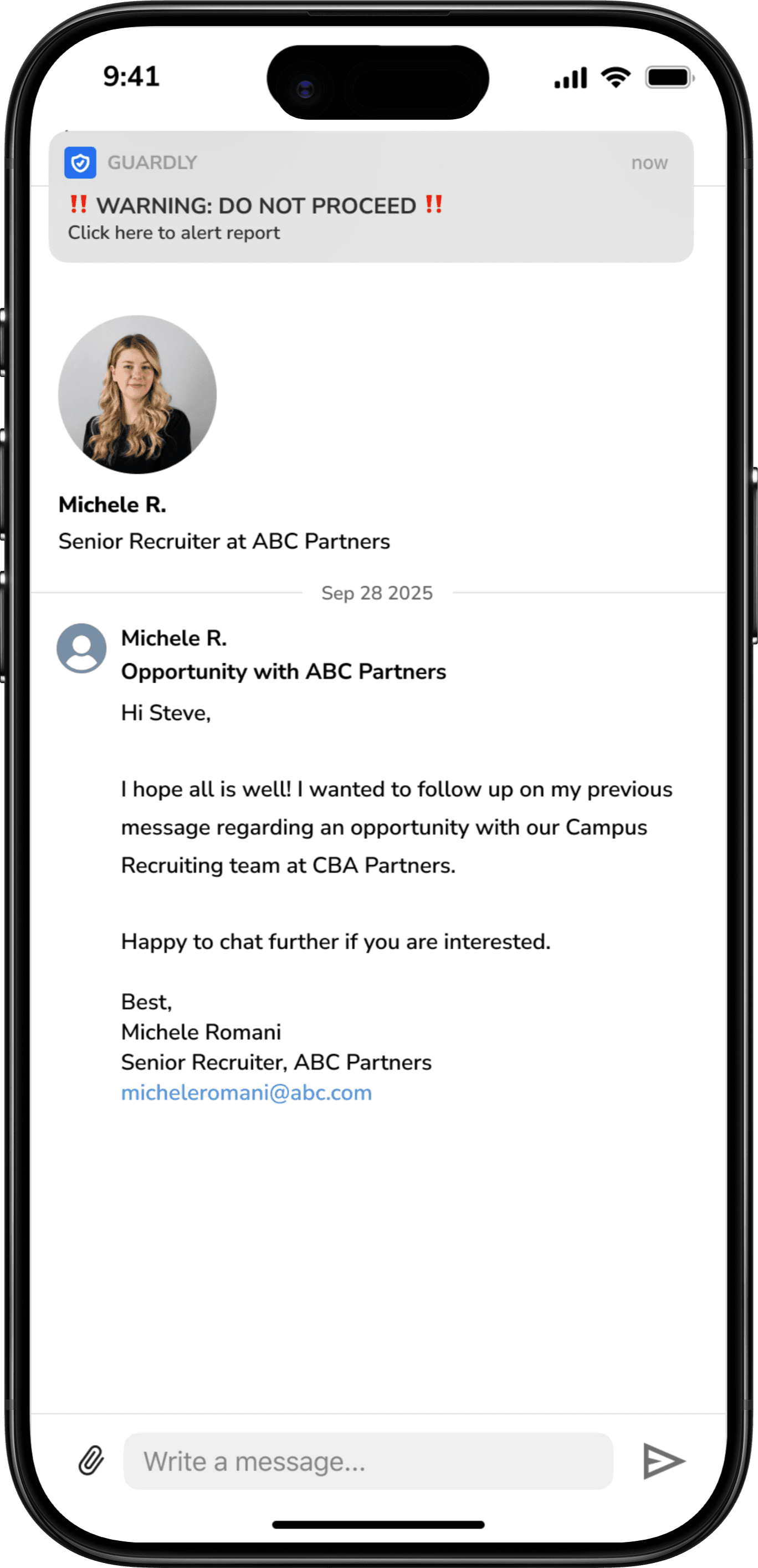

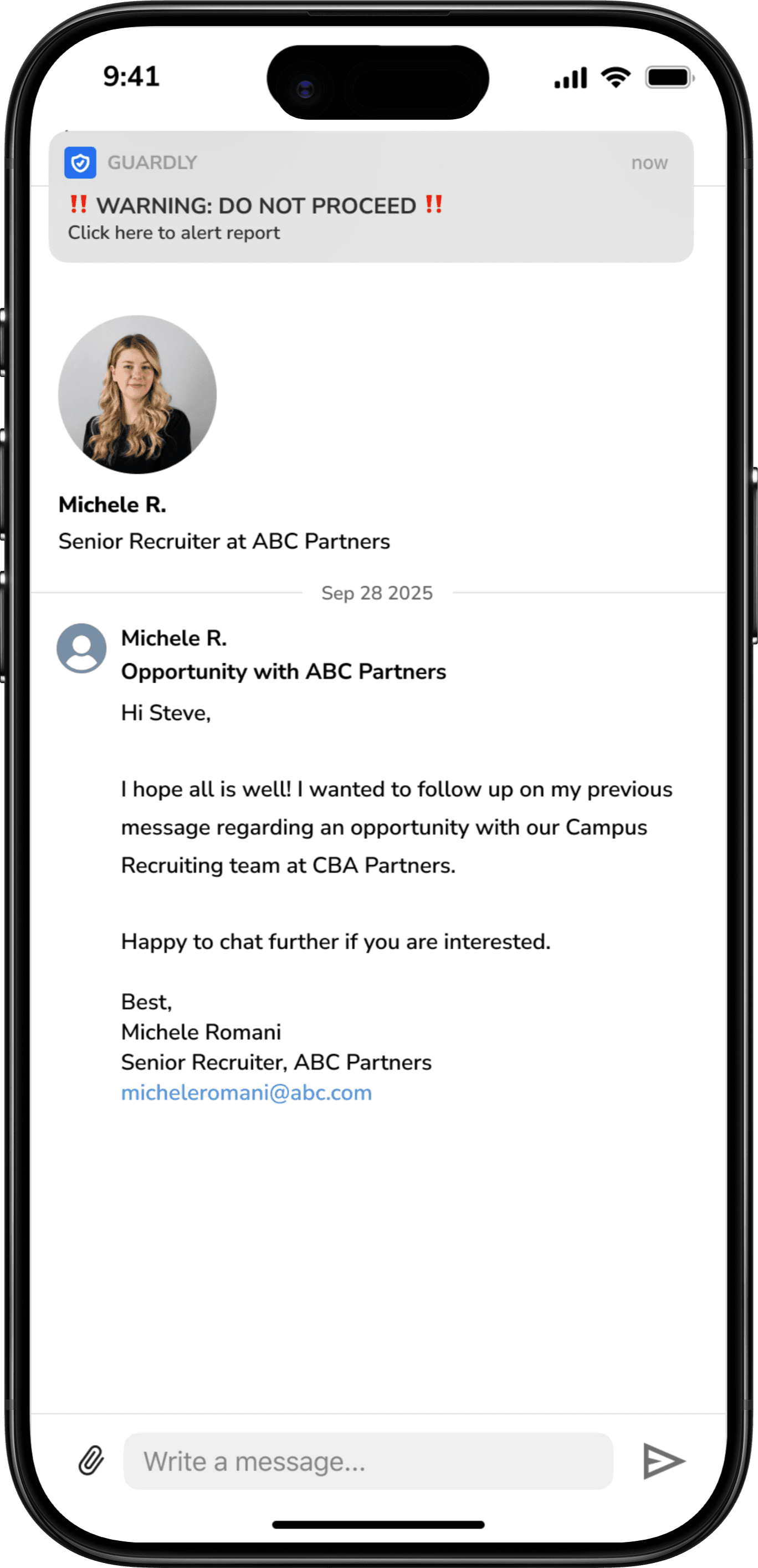

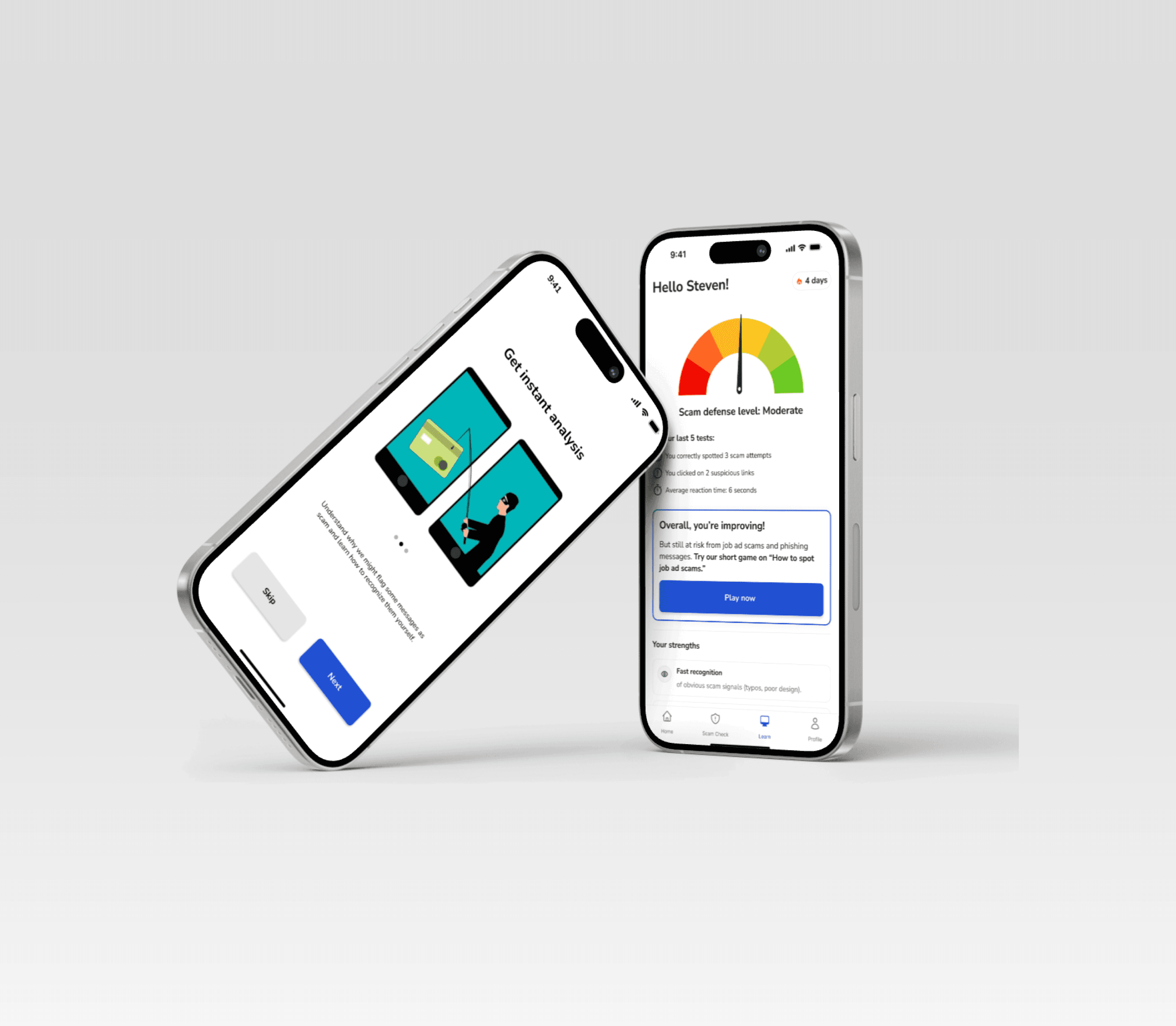

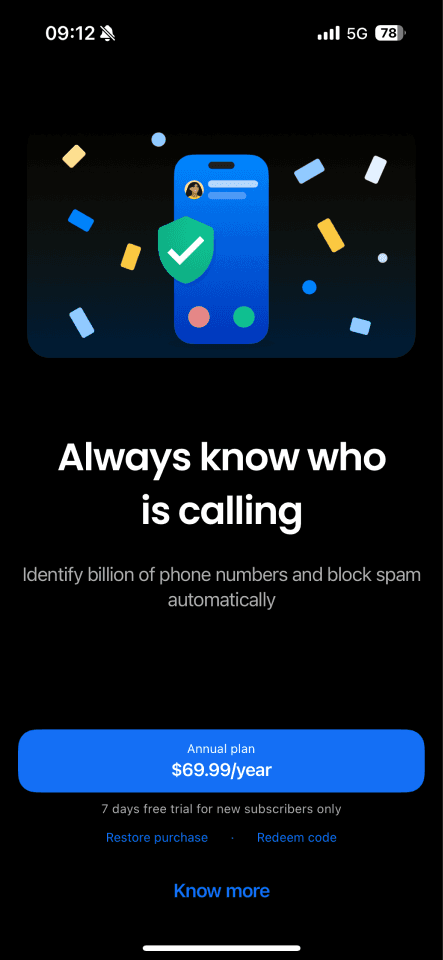

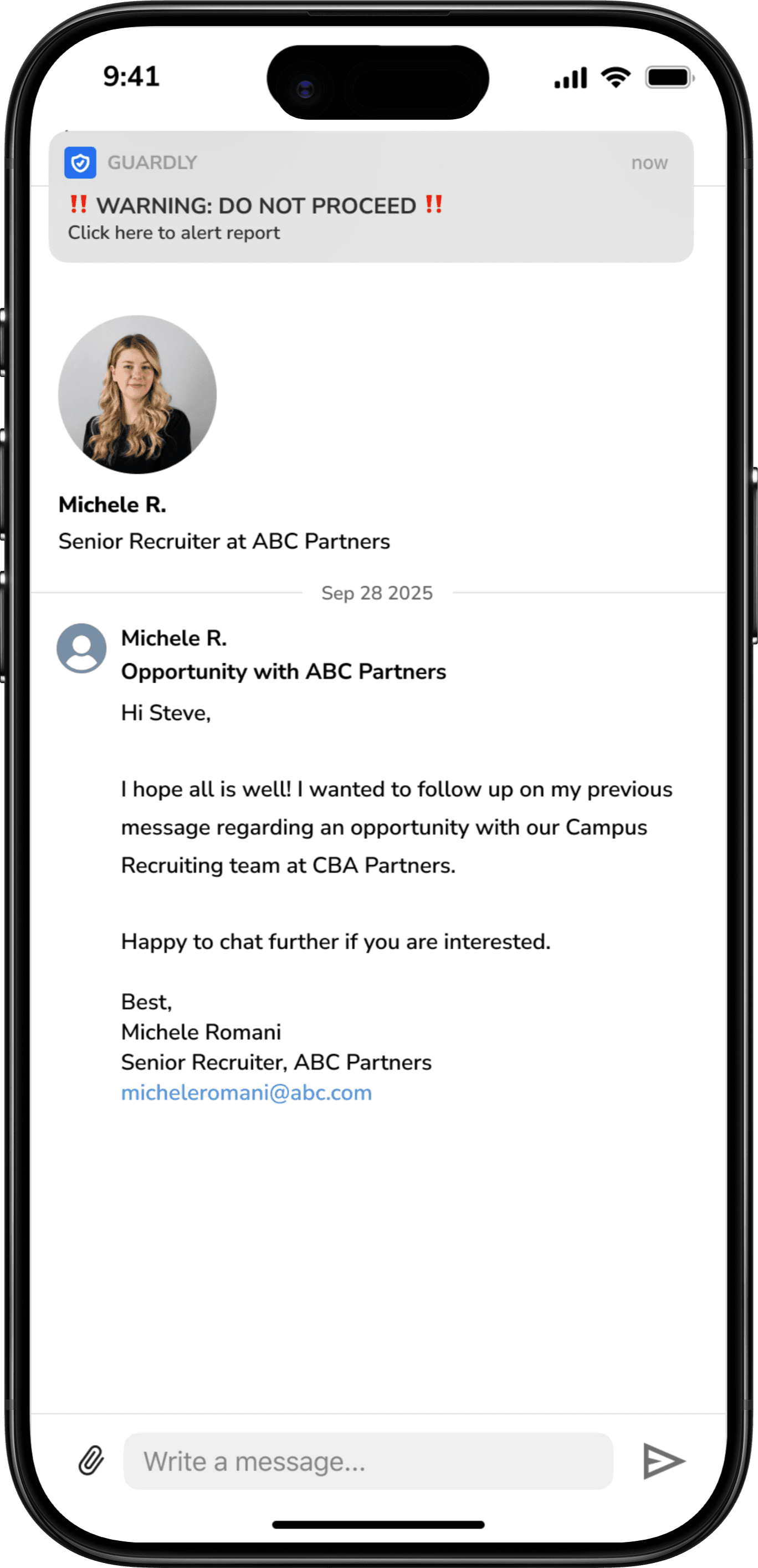

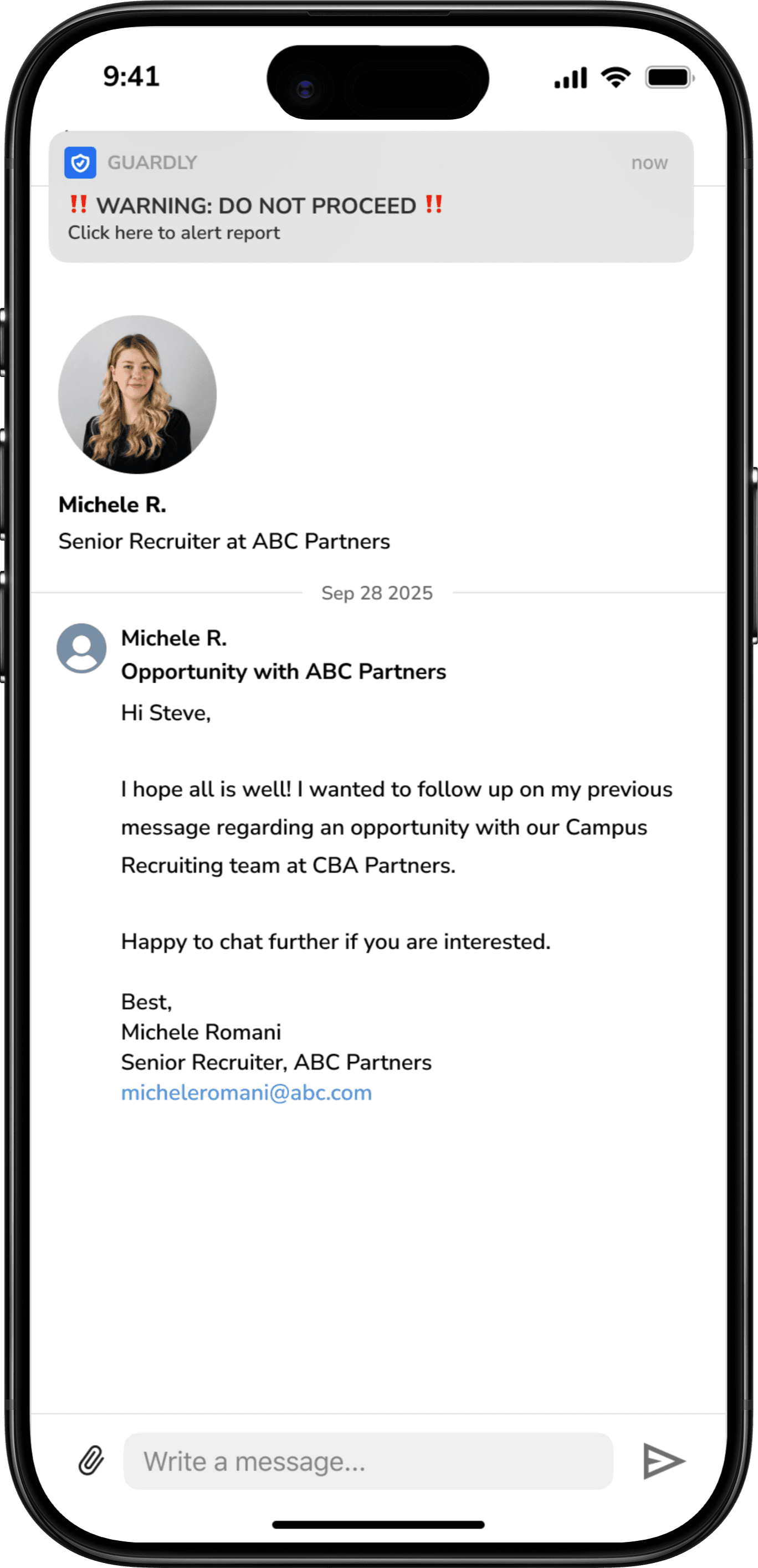

Our Solution - Guardly

A moment-based scam detection and education experience for job seekers

Timely warnings and clear guidance right when they matter most

Stays out of the way: Once connected with LinkedIn, it runs quietly in the background while users browse jobs and messages.

Steps in when risk is highest: It surfaces calm warnings only when suspicious patterns appear.

Helps users make sense of the risk: It breaks down what feels off so users can decide what to do next with confidence.

Empowers users to take action: Users can report suspected scams, and Guardly always closes the loop with clear follow-up, so reporting never feels pointless.

The Process

STARTING POINT

With 48 hours on the clock, we did a deep dive into the world of online scams — a space that turned out to be far more complex, emotional, and human than we expected.

While the brief broadly explored online safety, early research revealed many forms of digital harm. We chose to narrow our focus to job scams, where financial loss and emotional distress are often inflicted during the urgency, hope, and pressure of the job search.

DISCOVERING THE PROBLEM SPACE

In Australia alone, $13.5 million was lost to job scams in 2025, often during moments of urgency, hope, and emotional vulnerability.

Our research revealed that most existing scam prevention relies on policy, broad education, or platform-level signals such as labels and warnings. These interventions often live outside the moment of decision — appearing in classrooms, public campaigns, or after harm has already occurred — rather than when someone is about to click, reply, or share personal details.

We also found that heavy-handed regulation or platform bans can trigger resistance or feelings of censorship, especially when users don’t understand why something is being blocked. Even when warnings are present, they are often passive and non-actionable, leaving users unsure of what to do next.

In fast, emotionally charged online environments, urgency, optimism, and cognitive overload make it difficult for people to slow down and assess risk — precisely at the moments when caution matters most.

TALKING TO OUR USERS

Interviews with 25 young graduates revealed a pattern of overconfidence and vulnerability during scam encounters.

To dig deeper into these patterns, we conducted semi-structured interviews with 25 young graduates and early-career participants. We synthesised responses using an affinity diagram to cluster our findings.

[01] Overconfidence increases vulnerability

Many users, particularly younger job seekers, believed they were unlikely to fall for scams because they “grew up with the internet.” Despite this confidence, every interview participant had either been scammed or knew someone who had been.

[02] Intervention is often too late

Most existing solutions focus on education, policy, or content labeling, which typically occur before or after a risky interaction, not at the moment a user is deciding whether to engage. Cognitive overload and emotional decision-making mean critical thinking is often bypassed.

[03] Job scams are often the most legitimate-looking

Unlike obvious scams, job-related scams often mirror real recruitment processes. Interviews, offer letters, and professional language build trust. The scam typically reveals itself during onboarding or payroll setup, when sensitive information is requested.

[04] Scams are ignored, not reported

Reporting scams often felt pointless — once a report was submitted, users received no feedback or visibility into what happened next. Over time, this lack of transparency discouraged action, allowing scams to be ignored and repeated rather than actively addressed.

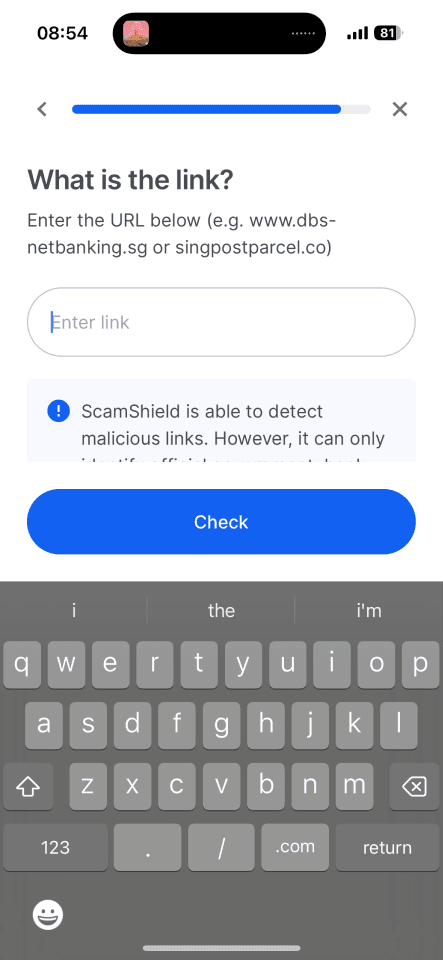

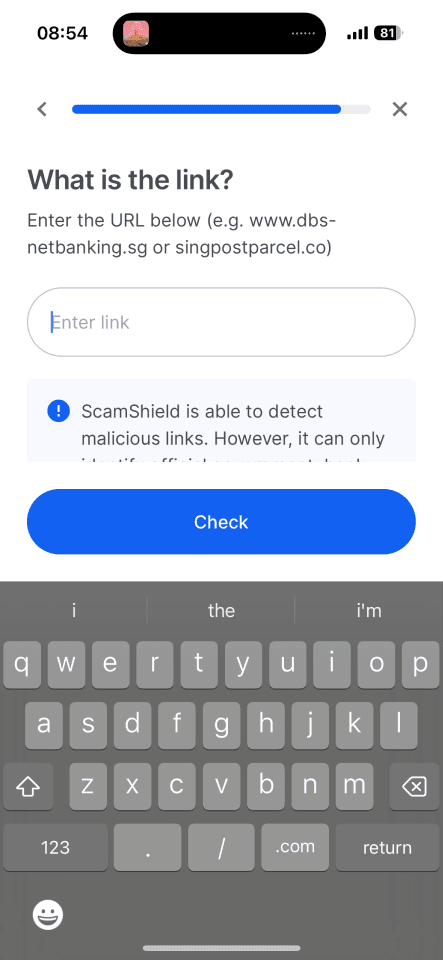

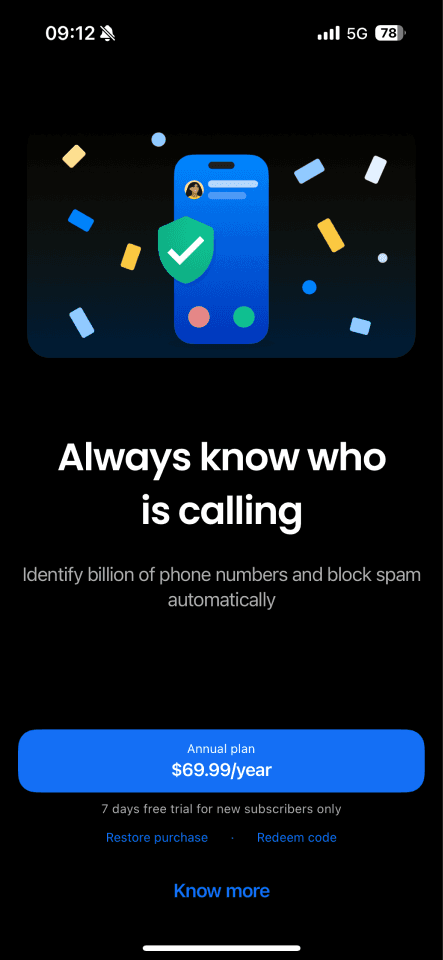

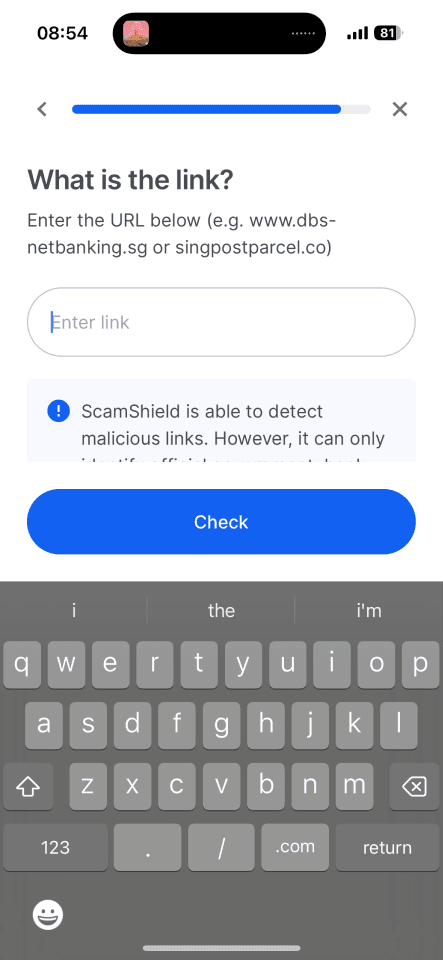

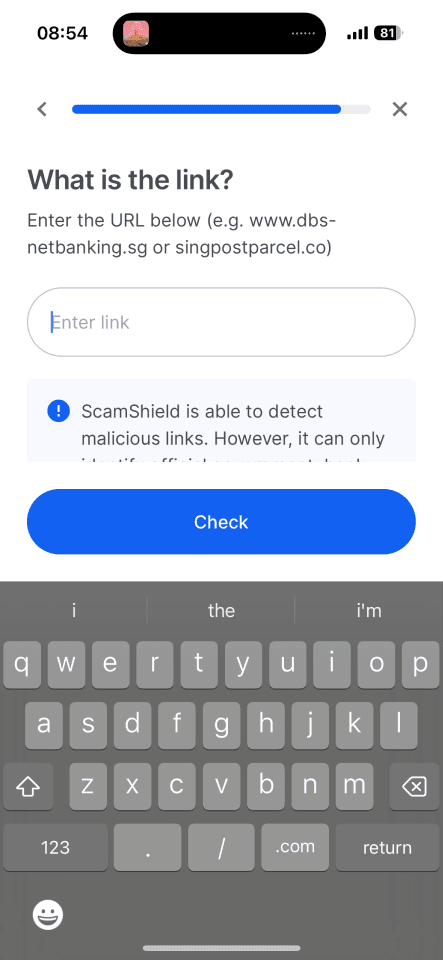

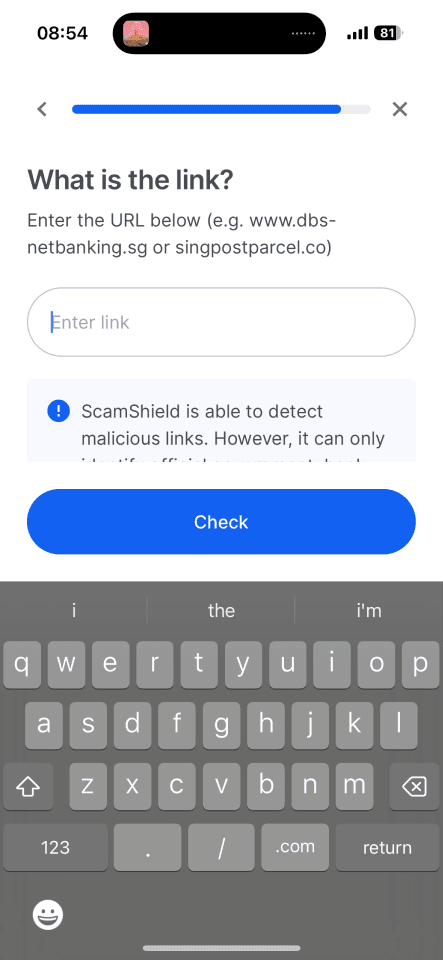

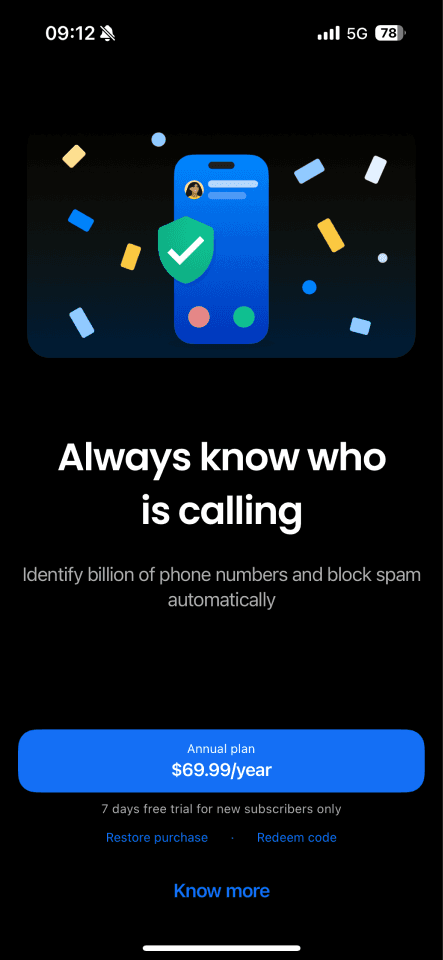

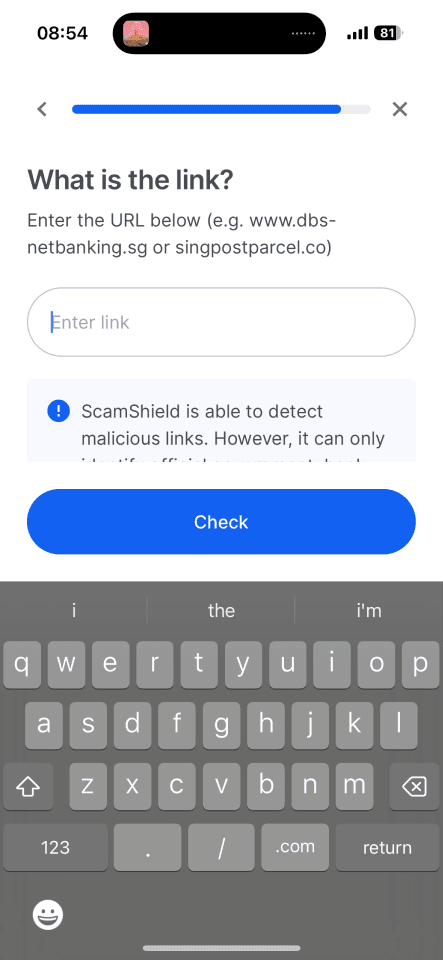

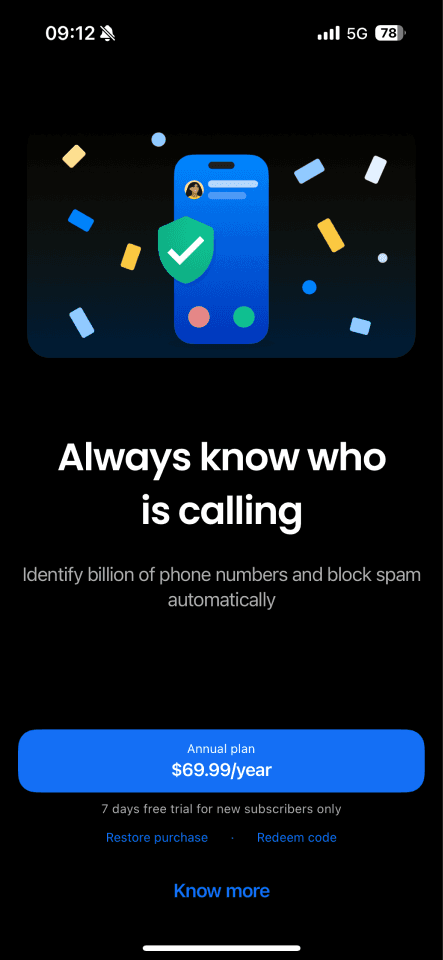

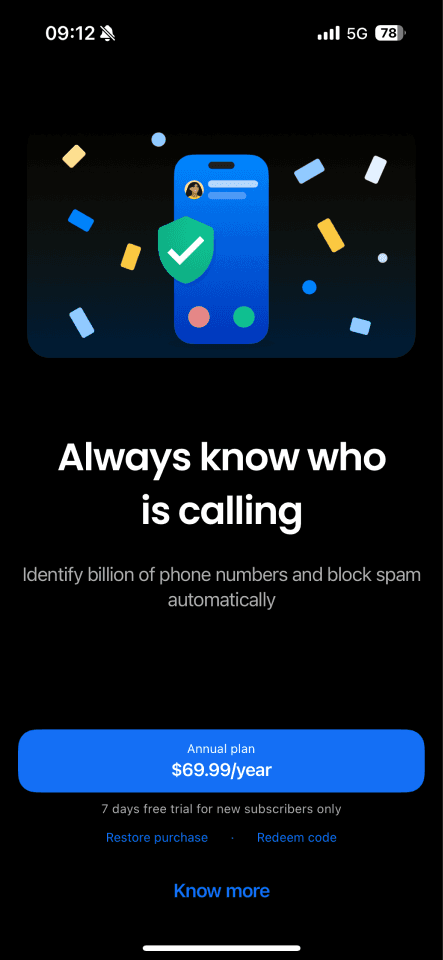

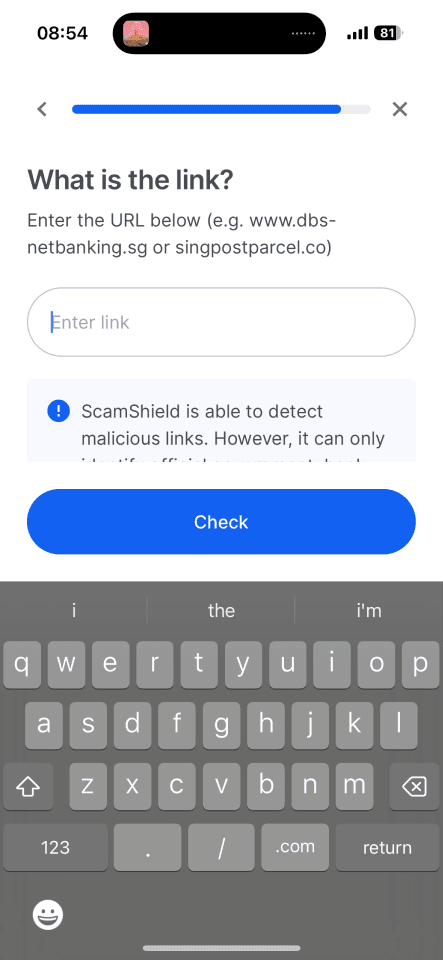

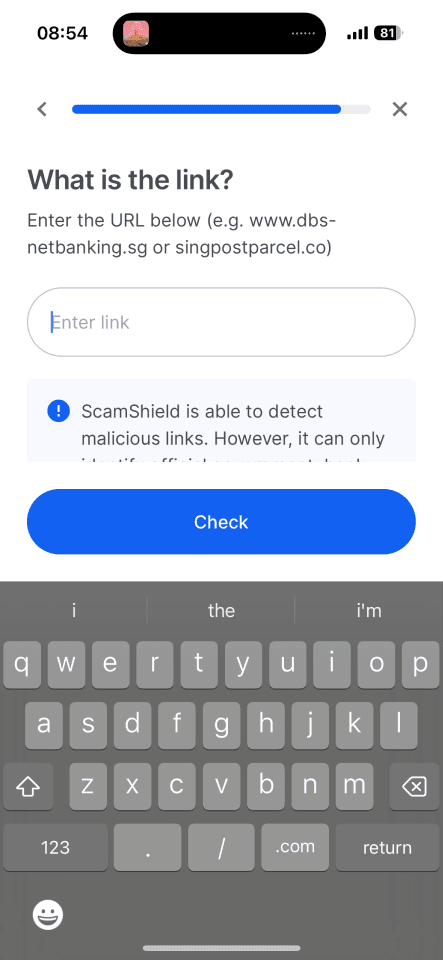

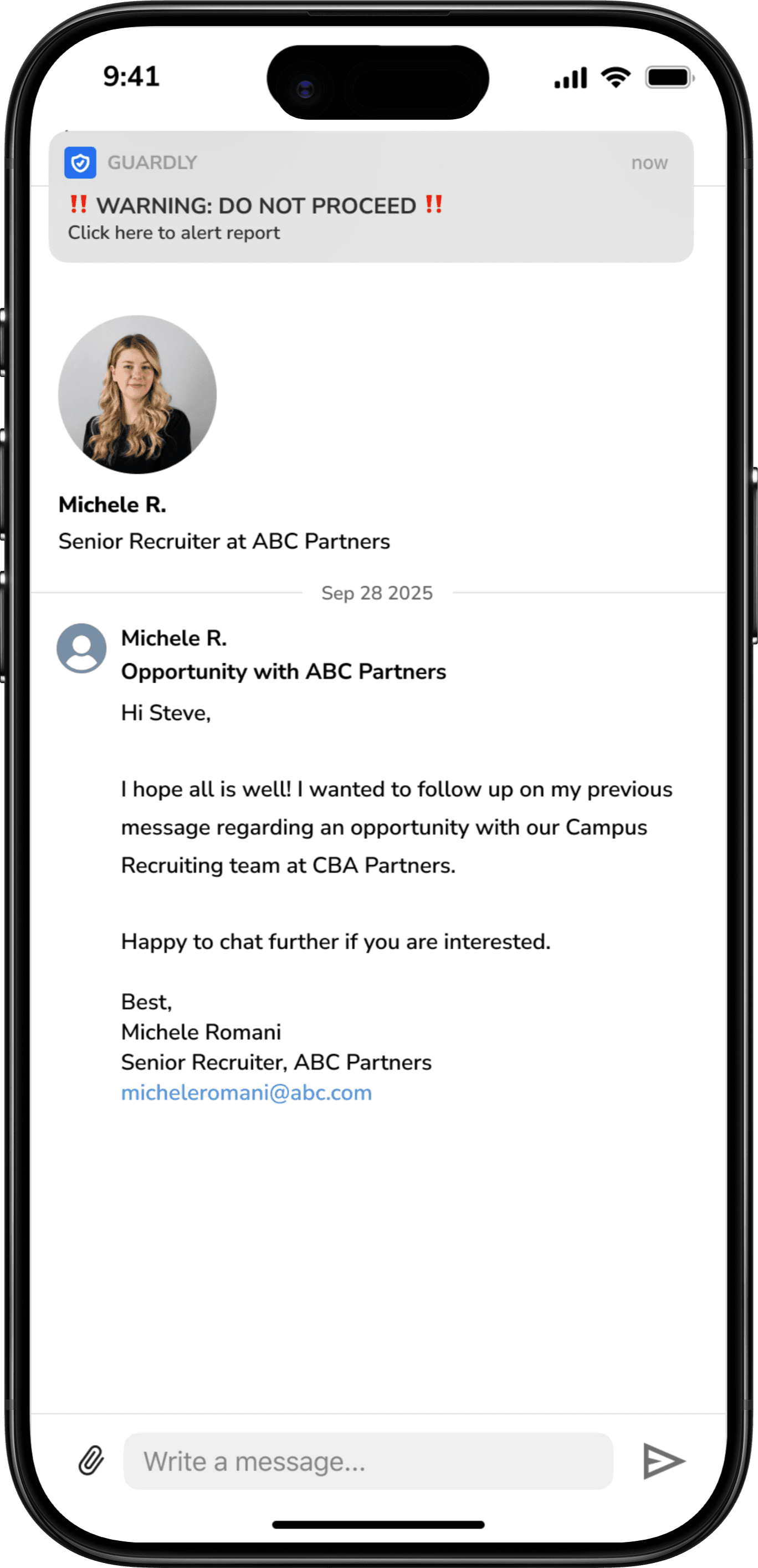

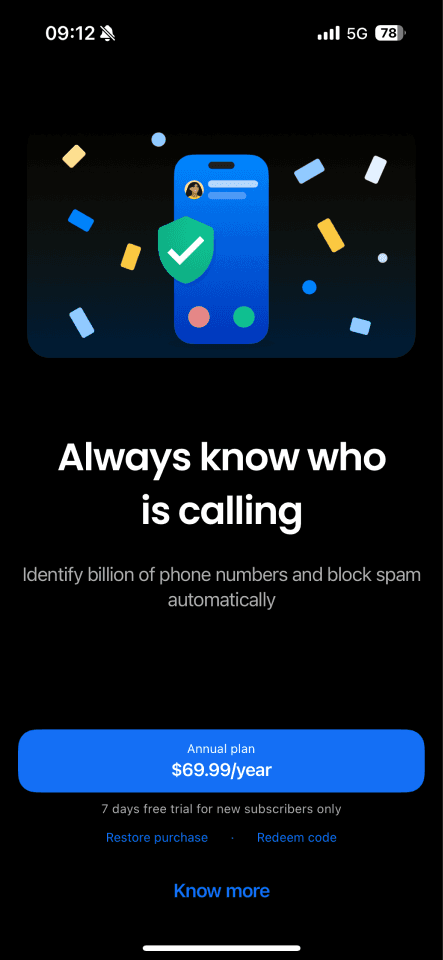

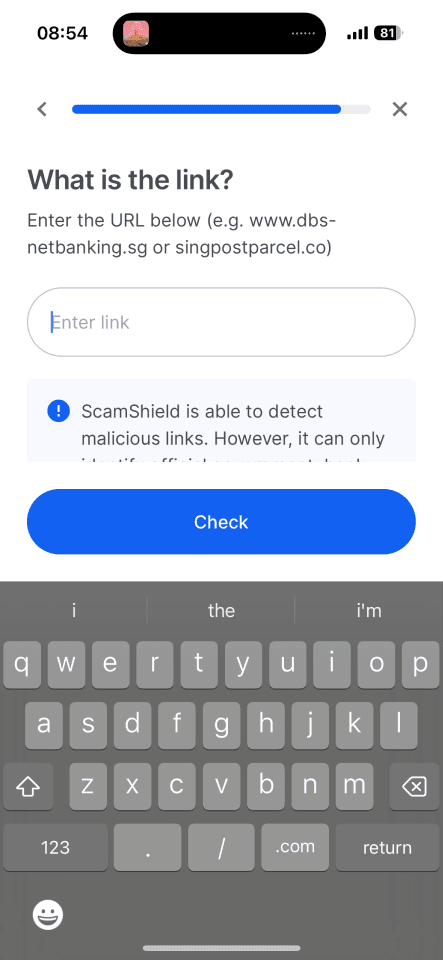

COMPETITOR ANALYSIS

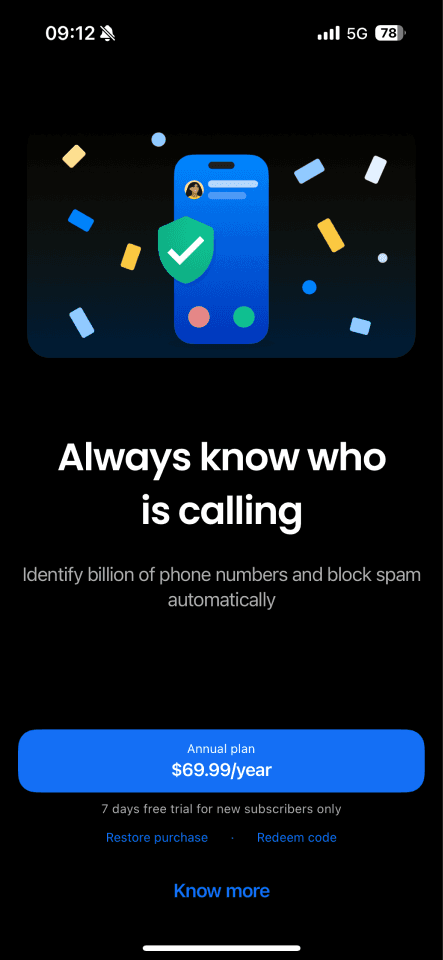

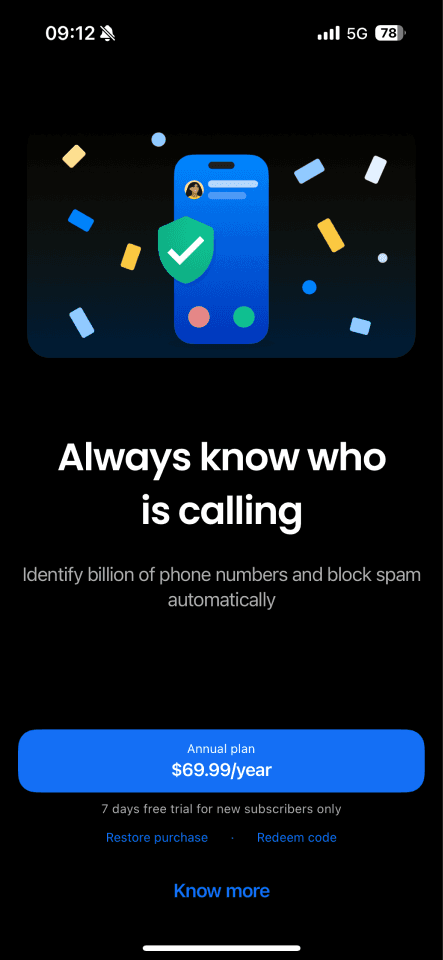

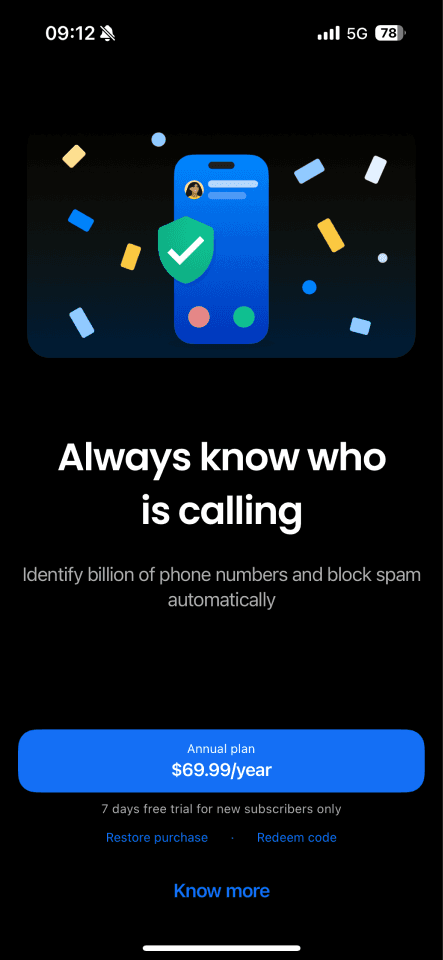

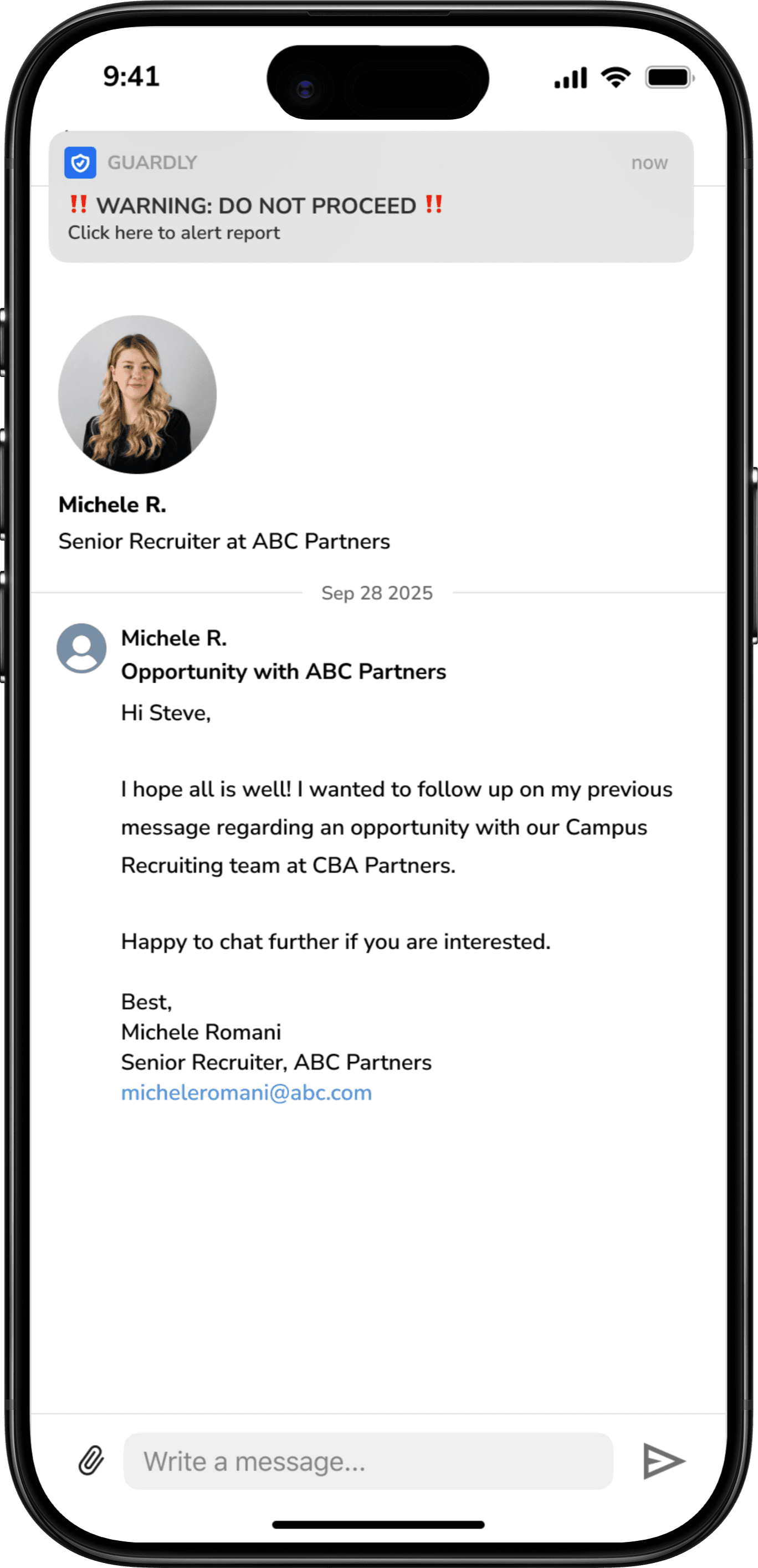

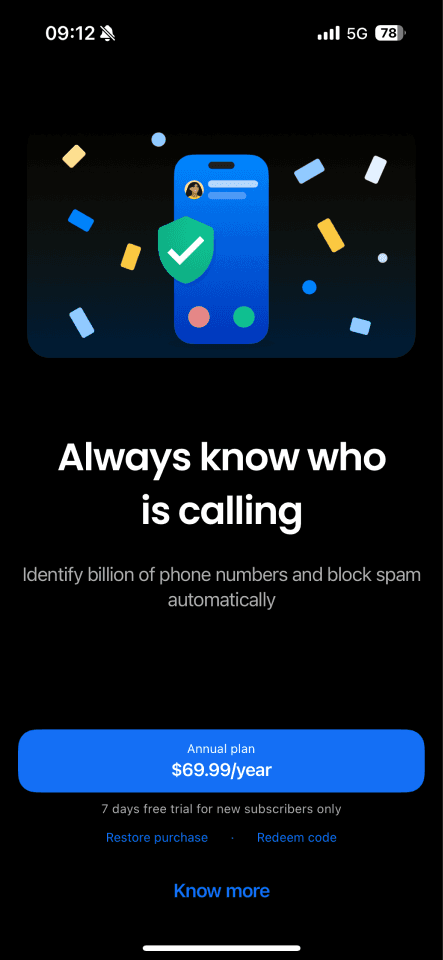

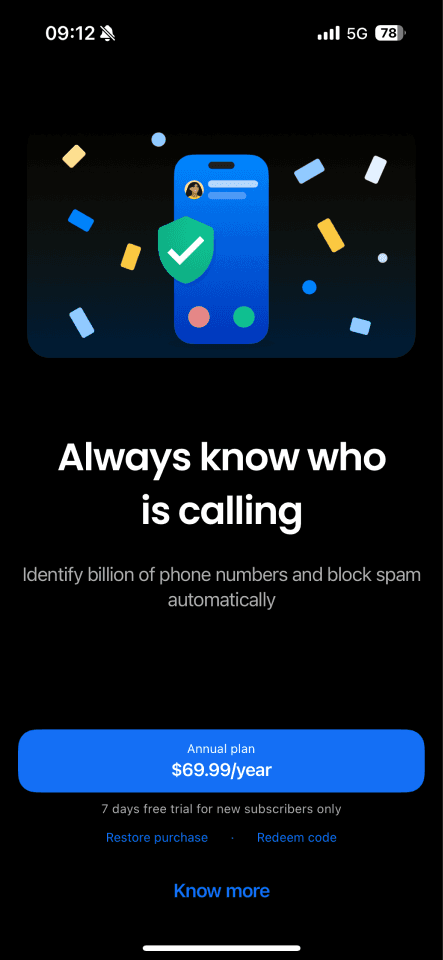

As our research progressed, we looked at existing scam protection tools such as Scam Shield and Truecaller. These products demonstrated how effective real-time intervention can be when scam detection is built directly into communication channels.

This made me think: “Why doesn’t this exist for online platforms, where scams are increasingly common?”

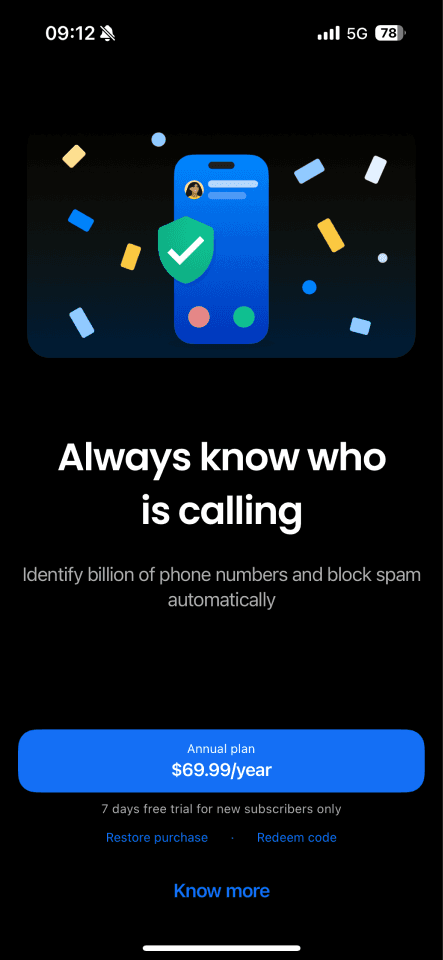

Scam Shield - Government-backed

Truecaller - Community-based

Where it falls short

Reactive by design: protection often happens after exposure rather than before action

Only available for phone calls and SMS

Minimal learning and reflection: flags scams without helping users understand why or build long-term awareness

What it does well

Government-backed, signalling strong credibility for a safety product.

Grounded in real-world scam behaviour: Ability to check links, numbers and messages

Most protection happens automatically in the background, reducing the cognitive load required from users.

APPROACHING A SOLUTION

Creating just enough friction to stop scams, while building long-term awareness

Under tight time constraints, we ran a Crazy 8s ideation session to rapidly explore solution directions. Using dot-voting, the team prioritised ideas that best aligned with our research insights and design principles. This allowed us to converge quickly on a focused, feasible solution.

Opportunities I identified

Timely intervention is key

We should introduce friction at the moment of decision, not reactively.

Education through context

We should go beyond flagging scams by helping users understand why something is risky, building long-term scam awareness and confidence.

Expand to social media and online platforms

We should extend scam prevention into social media and online platforms where scams increasingly occur, but protections are limited.

REFRAMING THE HMW

Based on our research and interviews, we reframed the design challenge to focus on when scams occur, not just what they look like.

To ground this reframe, we created personas and mapped the user journey to better empathize with our users.

Full research available on our FigJam board

FINAL SOLUTION

Job scams happen to more people than you think. Right now, no seamless tool helps job seekers verify opportunities as they browse. That gap leaves young Australians exposed, and it’s what our solution tackles.

Trust building & Platform connection

Intentional friction at point of risk

Learning through real-world practice

Key learnings

How to make confident trade-offs without overthinking

Working fast (and sleep-deprived), I learned to synthesise data quickly, communicate decisively, and prioritise under pressure.

Teamwork really does make the dream work. With only 48 hours and a team of five, this designathon became a crash course in collaboration under pressure — and a reminder of how much I enjoy working collaboratively. This project reinforced my interest in designing systems that support users during emotionally vulnerable moments, where thoughtful UX decisions can make a real difference.

What I'd do with more time

Test with real job seekers - across different age groups and levels of digital literacy to validate the moment-based intervention

Friction without discouraging users - refine warning language and tone without causing fear or disengagement

Expand to advertisement scams - extend the solution to address scams across broader online platforms

Guardly

A Scam-detection and education feature embedded within LinkedIn

INTRODUCTION

Guardly is a UX-led product developed during a 48-hour designathon, exploring how moment-based interventions can support job seekers at the point where scams are most likely to occur.

MY ROLE

I kept everyone aligned under tight time constraints by prioritising work and coordinating parallel workstreams. I contribute to the UX research and problem-definition phase, including framing the core challenge, sourcing supporting data, and shaping user interview questions.

I also owned the product narrative and final presentation to the judging panel, defining the story and communicating Guardly’s value and feasibility.

INTRODUCTION

Guardly is a UX-led product developed during a 48-hour designathon, exploring how moment-based interventions can support job seekers at the point where scams are most likely to occur.

[Project Scope]

Team of 5

[Role]

UX Designer - Product Manger

[Timeline]

48 hours designathon

View prototype

PROBLEM DISCOVERY

Job Scams look real and they catch people at their moment vunerable

Job scams no longer look suspicious. They look like real opportunities — complete with polished job listings, interviews, and official-looking offer letters. The danger often appears late in the process, during payroll onboarding, when job seekers are asked to share personal identification and bank details.

This makes scams especially effective during the job search, a time filled with urgency, hope, and emotional pressure. Many people are confident they won’t fall for scams until they find themselves caught in one.

This sparked a question -

“How might we empower users to confidently spot and report job scams so they can protect their personal information from online predators?”

PROBLEM DISCOVERY

Job Scams look real and they catch people at their moment vunerable

Job scams no longer look suspicious. They look like real opportunities — complete with polished job listings, interviews, and official-looking offer letters. The danger often appears late in the process, during payroll onboarding, when job seekers are asked to share personal identification and bank details.

This makes scams especially effective during the job search, a time filled with urgency, hope, and emotional pressure. Many people are confident they won’t fall for scams until they find themselves caught in one. This sparked a question -

“How might we empower users to confidently spot and report job scams so they can protect their personal information from online predators?”

Our Solution - Guardly

A moment-based scam detection and education experience for job seekers

Timely warning and clear guidance right when it matters most

Stays out of the way — Once connected with Linkedin, it runs quietly in the background while users browse jobs and messages.

Steps in when risk is highest — surfacing calm warnings only when suspicious patterns appear.

Helps users make sense of the risk — breaking down what feels off so users can decide what to do next with confidence.

Empowers users to take action — Report suspected scams and always closing the loop with clear follow-up, so reporting never feels pointless.

The Process

STARTING POINT

With 48 hours on the clock, we did a deep dive into the world of online scams — a space that turned out to be far more complex, emotional, and human than we expected.

While the brief broadly explored online safety, early research revealed many forms of digital harm. We chose to narrow our focus to job scams, where financial loss and emotional distress are often inflicted during the urgency, hope, and pressure of the job search.

DISCOVERING THE PROBLEM SPACE

In Australia alone, $13.5 million was lost to job scams in 2025, often during moments of urgency, hope, and emotional vulnerability.

Our research revealed that most existing scam prevention relies on policy, broad education, or platform-level signals such as labels and warnings. These interventions often live outside the moment of decision — appearing in classrooms, public campaigns, or after harm has already occurred — rather than when someone is about to click, reply, or share personal details.

We also found that heavy-handed regulation or platform bans can trigger resistance or feelings of censorship, especially when users don’t understand why something is being blocked. Even when warnings are present, they are often passive and non-actionable, leaving users unsure of what to do next.

In fast, emotionally charged online environments, urgency, optimism, and cognitive overload make it difficult for people to slow down and assess risk — precisely at the moments when caution matters most.

TALKING TO OUR USERS

Interviews with 25 young graduates revealed a pattern of overconfidence and vulnerability during scam encounters.

To dig deeper into these patterns, we conducted semi-structured interviews with 25 young graduates and early-career participants. We synthesised responses using an affinity diagram to cluster our findings.

[01] Overconfidence increases vulnerability

Many users, particularly younger job seekers, believed they were unlikely to fall for scams because they “grew up with the internet.” Despite this confidence, all interview participants are scam victims or knew someone who had been scammed.

[02] Intervention is often too late

Most existing solutions focus on education, policy, or content labeling, which typically occur before or after a risky interaction — not at the moment a user is deciding whether to engage. Cognitive overload and emotional decision-making mean critical thinking is often bypassed

[03] Job Scams are often the most legitimate looking

Unlike obvious scams, job-related scams often mirror real recruitment processes. Interviews, offer letters, and professional language build trust. The scam typically reveals itself during onboarding or payroll setup, when sensitive information is requested

[04] Scams are ignored, not reported

Reporting scams often felt pointless — once a report was submitted, users received no feedback or visibility into what happened next. Over time, this lack of transparency discouraged action, allowing scams to be ignored and repeated rather than actively addressed.

COMPETITOR ANALYSIS

As our research progressed, we looked at existing scam protection tools such as Scam Shield and Truecaller. These products demonstrated how effective real-time intervention can be when scam detection is built directly into communication channels.

This made me think: “Why doesn’t this exist for online platforms - where scams are increasingly common?”

Scam Shield - Government backed

True Caller - Community based

Where it falls short

Reactive by design, protection often happens after exposure rather than before action

Only available for phone calls and SMS

Minimal learning and reflection, flags scams without helping users understand why or build long-term awareness

What it does well

Government-backed, signalling strong credibility for a safety product.

Grounded in real-world scam behaviour: Ability to check links, numbers and messages

Most protection happens automatically in the background, reducing the cognitive load required from users.

APPROACHING A SOLUTION

Creating just enough friction to stop scams, while building long-term awareness

Under tight time constraints, we ran a Crazy 8s ideation session to rapidly explore solution directions. Using dot-voting, the team prioritised ideas that best aligned with our research insights and design principles. This allowed us to converge quickly on a focused, feasible solution.

Opportunities I identified

Timely intervention is key

We should introduce friction at the moment of decision, not reactively.

Education through context

We should go beyond flagging scams by helping users understand why something is risky, building long-term scam awareness and confidence.

Expand to social media and online platforms

We should extend scam prevention into social media and online platforms where scams increasingly occur, but protections are limited.

REFRAMING THE HMW

Based on our research and interview, we reframed the design challengre to focus on when the scams occur, not just what they look like

To ground this reframe, we created personas and mapped the user journey to better empathize with our users.

Full research available on our Figjam board

FINAL SOLUTION

Job scams happens to more people than you think. Right now, no seamless tool helps job seekers verify opportunities as they browse. That gap leaves young Australians exposed — and it’s what our solution tackles.This made me think: “Why doesn’t this exist for online platforms - where scams are increasingly common?”

Trust building & Platform connection

Intentional friction at point of risk

Learning through real-world practice

Key learnings

How to make confident trade-offs without overthinking

Working fast (and sleep-deprived), I learned to synthesis data quickly, communicate decisively and prioritize under pressure

Teamwork really does make the dream work. With only 48 hours and a team of five, this designathon became a crash course in collaboration under pressure — and a reminder of how much I enjoy working collaboratively. This project reinforced my interest in designing systems that support users during emotionally vulnerable moments, where thoughtful UX decisions can make a real difference.

What I'd do with more time

Test with real job seeker - across different age group and level of digital literacy to validate the moment-based intervention

Friction without discouraging users - refine warning language and tone without causing fear or disengagement

Expand to advertisement scams - Extend the solution to address scams across border online platforms

COMPETITOR ANALYSIS

As our research progressed, we looked at existing scam protection tools such as Scam Shield and Truecaller. These products demonstrated how effective real-time intervention can be when scam detection is built directly into communication channels.

This made me think: “Why doesn’t this exist for online platforms - where scams are increasingly common?”

Scam Shield - Government backed

What it does well

Government-backed, signalling strong credibility for a safety product.

Grounded in real-world scam behaviour: Ability to check links, numbers and messages

Most protection happens automatically in the background, reducing the cognitive load required from users.

True Caller - Community based

Where it falls short

Reactive by design, protection often happens after exposure rather than before action

Only available for phone calls and SMS

Minimal learning and reflection, flags scams without helping users understand why or build long-term awareness

TALKING TO OUR USERS

Interviews with 25 young graduates revealed a pattern of overconfidence and vulnerability during scam encounters.

To dig deeper into these patterns, we conducted semi-structured interviews with 25 young graduates and early-career participants. We synthesised responses using an affinity diagram to cluster our findings.

[01] Overconfidence increases vulnerability

Many users, particularly younger job seekers, believed they were unlikely to fall for scams because they “grew up with the internet.” Despite this confidence, all interview participants are scam victims or knew someone who had been scammed.

[02] Intervention is often too late

Most existing solutions focus on education, policy, or content labeling, which typically occur before or after a risky interaction — not at the moment a user is deciding whether to engage. Cognitive overload and emotional decision-making mean critical thinking is often bypassed

[03] Job Scams are often the most legitimate looking

Unlike obvious scams, job-related scams often mirror real recruitment processes. Interviews, offer letters, and professional language build trust. The scam typically reveals itself during onboarding or payroll setup, when sensitive information is requested

[04] Scams are ignored, not reported

Reporting scams often felt pointless — once a report was submitted, users received no feedback or visibility into what happened next. Over time, this lack of transparency discouraged action, allowing scams to be ignored and repeated rather than actively addressed.

Opportunities I identified

Timely intervention is key

We should introduce friction at the moment of decision, not reactively.

Education through context

We should go beyond flagging scams by helping users understand why something is risky, building long-term scam awareness and confidence.

Expand to social media and online platforms

We should extend scam prevention into social media and online platforms where scams increasingly occur, but protections are limited.

Our Solution - Guardly

A moment-based scam detection and education experience for job seekers

Timely warning and clear guidance right when it matters most

Stays out of the way: once connected with LinkedIn, it runs quietly in the background while users browse jobs and messages.

Steps in when risk is highest: it surfaces calm warnings only when suspicious patterns appear.

Helps users make sense of the risk: it breaks down what feels off so users can decide what to do next with confidence.

Empowers users to take action: users can report suspected scams, and every report closes the loop with clear follow-up, so reporting never feels pointless.

The Process

STARTING POINT

With 48 hours on the clock, we did a deep dive into the world of online scams — a space that turned out to be far more complex, emotional, and human than we expected.

While the brief broadly explored online safety, early research revealed many forms of digital harm. We chose to narrow our focus to job scams, where financial loss and emotional distress are often inflicted during the urgency, hope, and pressure of the job search.

DISCOVERING THE PROBLEM SPACE

In Australia alone, $13.5 million was lost to job scams in 2025, often during moments of urgency, hope, and emotional vulnerability.

Our research revealed that most existing scam prevention relies on policy, broad education, or platform-level signals such as labels and warnings. These interventions often live outside the moment of decision — appearing in classrooms, public campaigns, or after harm has already occurred — rather than when someone is about to click, reply, or share personal details.

We also found that heavy-handed regulation or platform bans can trigger resistance or feelings of censorship, especially when users don’t understand why something is being blocked. Even when warnings are present, they are often passive and non-actionable, leaving users unsure of what to do next.

In fast, emotionally charged online environments, urgency, optimism, and cognitive overload make it difficult for people to slow down and assess risk — precisely at the moments when caution matters most.

TALKING TO OUR USERS

Interviews with 25 young graduates revealed a pattern of overconfidence and vulnerability during scam encounters.

To dig deeper into these patterns, we conducted semi-structured interviews with 25 young graduates and early-career participants. We synthesised responses using an affinity diagram to cluster our findings.

[01] Overconfidence increases vulnerability

Many users, particularly younger job seekers, believed they were unlikely to fall for scams because they “grew up with the internet.” Despite this confidence, every interview participant had either been scammed or knew someone who had.

[02] Intervention is often too late

Most existing solutions focus on education, policy, or content labeling, which typically occur before or after a risky interaction, not at the moment a user is deciding whether to engage. Cognitive overload and emotional decision-making mean critical thinking is often bypassed.

[03] Job scams are often the most legitimate-looking

Unlike obvious scams, job-related scams often mirror real recruitment processes. Interviews, offer letters, and professional language build trust. The scam typically reveals itself during onboarding or payroll setup, when sensitive information is requested.

[04] Scams are ignored, not reported

Reporting scams often felt pointless — once a report was submitted, users received no feedback or visibility into what happened next. Over time, this lack of transparency discouraged action, allowing scams to be ignored and repeated rather than actively addressed.

COMPETITOR ANALYSIS

As our research progressed, we looked at existing scam protection tools such as Scam Shield and Truecaller. These products demonstrated how effective real-time intervention can be when scam detection is built directly into communication channels.

This made me think: “Why doesn’t this exist for online platforms - where scams are increasingly common?”

Scam Shield - Government backed

Truecaller - Community based

Where it falls short

Reactive by design, protection often happens after exposure rather than before action

Only available for phone calls and SMS

Minimal learning and reflection, flags scams without helping users understand why or build long-term awareness

What it does well

Government-backed, signalling strong credibility for a safety product.

Grounded in real-world scam behaviour: Ability to check links, numbers and messages

Most protection happens automatically in the background, reducing the cognitive load required from users.

APPROACHING A SOLUTION

Creating just enough friction to stop scams, while building long-term awareness

Under tight time constraints, we ran a Crazy 8s ideation session to rapidly explore solution directions. Using dot-voting, the team prioritised ideas that best aligned with our research insights and design principles. This allowed us to converge quickly on a focused, feasible solution.

Opportunities I identified

Timely intervention is key

We should introduce friction at the moment of decision, not reactively.

Education through context

We should go beyond flagging scams by helping users understand why something is risky, building long-term scam awareness and confidence.

Expand to social media and online platforms

We should extend scam prevention into social media and online platforms where scams increasingly occur, but protections are limited.

REFRAMING THE HMW

Based on our research and interviews, we reframed the design challenge to focus on when scams occur, not just what they look like.

To ground this reframe, we created personas and mapped the user journey to better empathize with our users.

Full research available on our FigJam board

FINAL SOLUTION

Job scams happen to more people than you think. Right now, no seamless tool helps job seekers verify opportunities as they browse. That gap leaves young Australians exposed, and it’s what our solution tackles.

Trust building & Platform connection

Intentional friction at point of risk

Learning through real-world practice

Key learnings

How to make confident trade-offs without overthinking

Working fast (and sleep-deprived), I learned to synthesise data quickly, communicate decisively, and prioritise under pressure.

Teamwork really does make the dream work. With only 48 hours and a team of five, this designathon became a crash course in collaboration under pressure — and a reminder of how much I enjoy working collaboratively. This project reinforced my interest in designing systems that support users during emotionally vulnerable moments, where thoughtful UX decisions can make a real difference.

What I'd do with more time

Test with real job seekers - across different age groups and levels of digital literacy, to validate the moment-based intervention

Friction without discouraging users - refine warning language and tone without causing fear or disengagement

Expand to advertisement scams - extend the solution to address scams across broader online platforms
